27th March 2009. It's now a year since I wrote this post. Thanks to some comments by Janus2007 I've realised that it needs updating. I've replaced the code with the current version of the Suteki Shop LINQ generic repository. There are a number of changes in the way it works. The most obvious, and one that I should have updated a long time ago, is that the GetAll method now returns an IQueryable<T> rather than an array. I actually changed this soon after I wrote the post, but totally forgot about the naive implementation given here.

The other major change is marking the SubmitChanges methods as obsolete. Jeremy Skinner, who has been doing some excellent work on Suteki Shop, has pushed this change. Unit of Work (DataContext) management is now handled by attributes on action methods.

Please have a look at the Suteki Shop code to see the generic repository in action:

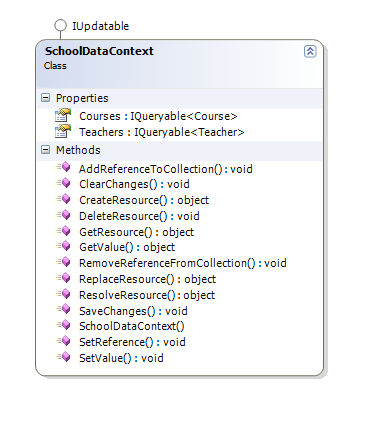

LINQ to SQL is a quantum leap in productivity for most mainstream .NET developers. Some folks may have been using NHibernate or some other ORM tool for years, but my experience in a number of .NET shops has been that the majority of developers still hand code their data access layer. LINQ is going to bring some fundamental changes to the way we architect our applications. In particular, being able to write query-style syntax directly in C# against both a SQL Server database and in-memory object graphs raises some interesting questions about application architecture.

So where is our point of separation between data access and domain? Surely I'm not recommending that we abandon a layered architecture and write all our data access directly into our domain classes?

My current project is based on the new MVC Framework. I've been using LINQ to SQL for data access as well as an IoC container (Windsor) and NUnit plus Rhino Mocks for testing. For my data access layer I've used the IRepository pattern popularized by Ayende in his excellent MSDN article on IoC and DI. My Repository looks like this:

    using System;
    using System.Linq;
    using System.Linq.Expressions;
    using System.Data.Linq;
    using Suteki.Common.Extensions;

    namespace Suteki.Common.Repositories
    {
        public interface IRepository<T> where T : class
        {
            T GetById(int id);
            IQueryable<T> GetAll();
            void InsertOnSubmit(T entity);
            void DeleteOnSubmit(T entity);
            [Obsolete("Units of Work should be managed externally to the Repository.")]
            void SubmitChanges();
        }

        public interface IRepository
        {
            object GetById(int id);
            IQueryable GetAll();
            void InsertOnSubmit(object entity);
            void DeleteOnSubmit(object entity);
            [Obsolete("Units of Work should be managed externally to the Repository.")]
            void SubmitChanges();
        }

        public class Repository<T> : IRepository<T>, IRepository where T : class
        {
            readonly DataContext dataContext;

            public Repository(IDataContextProvider dataContextProvider)
            {
                dataContext = dataContextProvider.DataContext;
            }

            public virtual T GetById(int id)
            {
                var itemParameter = Expression.Parameter(typeof(T), "item");
                var whereExpression = Expression.Lambda<Func<T, bool>>
                    (
                    Expression.Equal(
                        Expression.Property(
                            itemParameter,
                            typeof(T).GetPrimaryKey().Name
                            ),
                        Expression.Constant(id)
                        ),
                    new[] { itemParameter }
                    );
                return GetAll().Where(whereExpression).Single();
            }

            public virtual IQueryable<T> GetAll()
            {
                return dataContext.GetTable<T>();
            }

            public virtual void InsertOnSubmit(T entity)
            {
                GetTable().InsertOnSubmit(entity);
            }

            public virtual void DeleteOnSubmit(T entity)
            {
                GetTable().DeleteOnSubmit(entity);
            }

            public virtual void SubmitChanges()
            {
                dataContext.SubmitChanges();
            }

            public virtual ITable GetTable()
            {
                return dataContext.GetTable<T>();
            }

            IQueryable IRepository.GetAll()
            {
                return GetAll();
            }

            void IRepository.InsertOnSubmit(object entity)
            {
                InsertOnSubmit((T)entity);
            }

            void IRepository.DeleteOnSubmit(object entity)
            {
                DeleteOnSubmit((T)entity);
            }

            object IRepository.GetById(int id)
            {
                return GetById(id);
            }
        }
    }
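The expression tree in GetById is easier to follow when it runs against an in-memory list. The following is a minimal sketch, not the Suteki Shop code: `Product`, `ByIdSketch` and the explicit `keyName` parameter are assumptions made for illustration (the real repository gets the key name from `typeof(T).GetPrimaryKey()`). It builds the equivalent of `item => item.Id == id` by hand and applies it to any IQueryable<T>.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Linq.Expressions;

// Hypothetical entity used only for this sketch.
public class Product
{
    public int Id { get; set; }
    public string Name { get; set; }
}

public static class ByIdSketch
{
    // Builds the equivalent of: item => item.<keyName> == id
    // The key name is passed in explicitly here (an assumption of the sketch);
    // the repository above looks it up with typeof(T).GetPrimaryKey().Name.
    public static Expression<Func<T, bool>> KeyEquals<T>(string keyName, int id)
    {
        var item = Expression.Parameter(typeof(T), "item");
        var body = Expression.Equal(
            Expression.Property(item, keyName),
            Expression.Constant(id));
        return Expression.Lambda<Func<T, bool>>(body, item);
    }

    // Applies the hand-built predicate to any IQueryable<T>, in-memory or not.
    public static T GetById<T>(IQueryable<T> source, string keyName, int id)
    {
        return source.Where(KeyEquals<T>(keyName, id)).Single();
    }
}
```

Calling `ByIdSketch.GetById(products.AsQueryable(), "Id", 2)` on a list of products returns the one whose Id is 2. Against a LINQ to SQL table the same expression would be handed to the query provider for translation rather than compiled and run in memory.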

As you can see, this generic repository insulates the rest of the application from the LINQ to SQL DataContext and provides basic data access methods for any domain class. Here's an example of it being used in a simple controller.

    using System.Web.Mvc;
    using Suteki.Common.Binders;
    using Suteki.Common.Filters;
    using Suteki.Common.Repositories;
    using Suteki.Common.Validation;
    using Suteki.Shop.Filters;
    using Suteki.Shop.Services;
    using Suteki.Shop.ViewData;
    using Suteki.Shop.Repositories;
    using MvcContrib;

    namespace Suteki.Shop.Controllers
    {
        [AdministratorsOnly]
        public class UserController : ControllerBase
        {
            readonly IRepository<User> userRepository;
            readonly IRepository<Role> roleRepository;
            private readonly IUserService userService;

            public UserController(IRepository<User> userRepository, IRepository<Role> roleRepository, IUserService userService)
            {
                this.userRepository = userRepository;
                this.roleRepository = roleRepository;
                this.userService = userService;
            }

            public ActionResult Index()
            {
                var users = userRepository.GetAll().Editable();
                return View("Index", ShopView.Data.WithUsers(users));
            }

            public ActionResult New()
            {
                return View("Edit", EditViewData.WithUser(Shop.User.DefaultUser));
            }

            [AcceptVerbs(HttpVerbs.Post), UnitOfWork]
            public ActionResult New(User user, string password)
            {
                if (!string.IsNullOrEmpty(password))
                {
                    user.Password = userService.HashPassword(password);
                }

                try
                {
                    user.Validate();
                }
                catch (ValidationException ex)
                {
                    ex.CopyToModelState(ModelState, "user");
                    return View("Edit", EditViewData.WithUser(user));
                }

                userRepository.InsertOnSubmit(user);
                Message = "User has been added.";
                return this.RedirectToAction(c => c.Index());
            }

            public ActionResult Edit(int id)
            {
                User user = userRepository.GetById(id);
                return View("Edit", EditViewData.WithUser(user));
            }

            [AcceptVerbs(HttpVerbs.Post), UnitOfWork]
            public ActionResult Edit([DataBind] User user, string password)
            {
                if (!string.IsNullOrEmpty(password))
                {
                    user.Password = userService.HashPassword(password);
                }

                try
                {
                    user.Validate();
                }
                catch (ValidationException validationException)
                {
                    validationException.CopyToModelState(ModelState, "user");
                    return View("Edit", EditViewData.WithUser(user));
                }

                return View("Edit", EditViewData.WithUser(user).WithMessage("Changes have been saved"));
            }

            public ShopViewData EditViewData
            {
                get
                {
                    return ShopView.Data.WithRoles(roleRepository.GetAll());
                }
            }
        }
    }

Because I'm using an IoC container, I don't have to do any more than request an instance of IRepository<User> in the constructor, and because the Windsor Container understands generics I only need a single configuration entry for all my generic repositories:

    <?xml version="1.0"?>
    <configuration>

      <!-- windsor configuration.
           This is a web application, all components must have a lifestyle of 'transient' or 'perWebRequest' -->
      <components>

        <!-- repositories -->

        <!-- data context provider (this must have a lifestyle of 'perWebRequest' to allow the same data context
             to be used by all repositories) -->
        <component
          id="datacontextprovider"
          service="Suteki.Common.Repositories.IDataContextProvider, Suteki.Common"
          type="Suteki.Common.Repositories.DataContextProvider, Suteki.Common"
          lifestyle="perWebRequest"
          />

        <component
          id="menu.repository"
          service="Suteki.Common.Repositories.IRepository`1[[Suteki.Shop.Menu, Suteki.Shop]], Suteki.Common"
          type="Suteki.Shop.Models.MenuRepository, Suteki.Shop"
          lifestyle="transient"
          />

        <component
          id="generic.repository"
          service="Suteki.Common.Repositories.IRepository`1, Suteki.Common"
          type="Suteki.Common.Repositories.Repository`1, Suteki.Common"
          lifestyle="transient" />

        ....

      </components>
    </configuration>

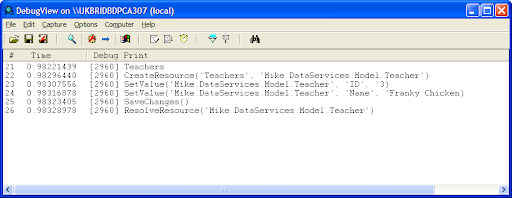

The IoC container also provides the DataContext, by way of the IDataContextProvider component. Note that the provider's lifestyle is perWebRequest. This means that a single DataContext is shared between all the repositories in a single request.
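To make the "single configuration entry" idea concrete, here is a toy sketch of how a container can satisfy a request for a closed generic such as IRepository<User> from one open generic registration. This is not how Windsor is implemented; `TinyContainer`, `IRepo<T>`, `Repo<T>`, `Menu`, `Shopper` and `MenuRepo` are all hypothetical names, constructor injection and lifestyles are left out, and the rule that a specific registration wins over the open generic one is an assumption of the sketch.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-ins for the repository types in this post.
public interface IRepo<T> where T : class { string Describe(); }

public class Repo<T> : IRepo<T> where T : class
{
    public string Describe() { return "Repo<" + typeof(T).Name + ">"; }
}

public class Menu { }
public class Shopper { }

// A specialised repository, analogous to the menu.repository entry above.
public class MenuRepo : Repo<Menu> { }

public class TinyContainer
{
    readonly Dictionary<Type, Type> registrations = new Dictionary<Type, Type>();

    public void Register(Type service, Type implementation)
    {
        registrations[service] = implementation;
    }

    public object Resolve(Type service)
    {
        Type implementation;

        // 1. An exact registration (e.g. IRepo<Menu>) is used if present.
        if (registrations.TryGetValue(service, out implementation))
        {
            return Activator.CreateInstance(implementation);
        }

        // 2. Otherwise look for an open generic registration (IRepo<>)
        //    and close the implementation over the requested type arguments.
        if (service.IsGenericType &&
            registrations.TryGetValue(service.GetGenericTypeDefinition(), out implementation))
        {
            Type closed = implementation.MakeGenericType(service.GetGenericArguments());
            return Activator.CreateInstance(closed);
        }

        throw new InvalidOperationException("No component registered for " + service);
    }

    public T Resolve<T>() { return (T)Resolve(typeof(T)); }
}
```

With `Register(typeof(IRepo<>), typeof(Repo<>))` in place, `Resolve<IRepo<Shopper>>()` yields a `Repo<Shopper>` without any entry that mentions `Shopper`, which is the effect the generic.repository component has in the configuration above.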

To test my UserController I can pass an object graph from a mock IRepository<User>.

    [Test]
    public void IndexShouldDisplayListOfUsers()
    {
        User[] users = new User[] { };
        UserListViewData viewData = null;

        using (mocks.Record())
        {
            Expect.Call(userRepository.GetAll()).Return(users);

            userController.RenderView(null, null);
            LastCall.Callback(new Func<string, string, object, bool>((v, m, vd) =>
            {
                viewData = (UserListViewData)vd;
                return true;
            }));
        }

        using (mocks.Playback())
        {
            userController.Index();
            Assert.AreSame(users, viewData.Users);
        }
    }
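A mocking framework is not the only way to feed an object graph to a component under test. As a rough alternative sketch, a hand-written in-memory repository can stand in for the LINQ to SQL one. Everything here is an assumption for illustration: the interface is a trimmed copy of the one earlier in the post (without the obsolete SubmitChanges), and `InMemoryRepository<T>`, `Account` and `AccountLister` are hypothetical names.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Trimmed copy of the post's interface so the sketch compiles on its own.
public interface IRepository<T> where T : class
{
    T GetById(int id);
    IQueryable<T> GetAll();
    void InsertOnSubmit(T entity);
    void DeleteOnSubmit(T entity);
}

// Hypothetical test double backed by a List<T>.
// Unlike a DataContext, inserts and deletes take effect immediately.
public class InMemoryRepository<T> : IRepository<T> where T : class
{
    readonly List<T> items;
    readonly Func<T, int> getId;

    public InMemoryRepository(IEnumerable<T> seed, Func<T, int> getId)
    {
        items = new List<T>(seed);
        this.getId = getId;
    }

    public T GetById(int id) { return items.Single(x => getId(x) == id); }
    public IQueryable<T> GetAll() { return items.AsQueryable(); }
    public void InsertOnSubmit(T entity) { items.Add(entity); }
    public void DeleteOnSubmit(T entity) { items.Remove(entity); }
}

// Hypothetical entity and consumer, standing in for User and UserController.
public class Account
{
    public int Id { get; set; }
    public string Email { get; set; }
}

public class AccountLister
{
    readonly IRepository<Account> accounts;

    public AccountLister(IRepository<Account> accounts)
    {
        this.accounts = accounts;
    }

    // The LINQ query lives in the consumer and only sees IQueryable<Account>.
    public string[] EmailsInOrder()
    {
        return accounts.GetAll()
            .OrderBy(a => a.Email)
            .Select(a => a.Email)
            .ToArray();
    }
}
```

The consumer takes only the interface in its constructor, so a test can hand it an `InMemoryRepository<Account>` while the running application gets whatever the container resolves.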

Established layered architecture patterns insulate the domain model (business objects) from the database by putting data access code into a data access layer that provides services for persisting objects to, and retrieving them from, the database. This layered approach becomes essential as soon as you start doing Test Driven Development, which requires you to test your code in isolation from your database.

So is LINQ data access code? I don't think so. Because the syntax for querying in-memory object graphs is identical to that for querying the database, it makes sense to place LINQ queries in your domain layer. During testing, the component under test can work with in-memory object graphs, but when integrated with the data access layer those same queries become SQL queries to a database.

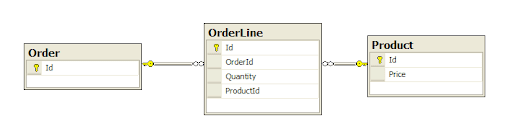

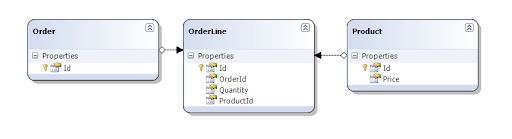
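One way to picture queries living in the domain layer is a set of extension methods written purely against IQueryable<T>. The sketch below is illustrative only: `Invoice` and `InvoiceQueries` are hypothetical names, not part of Suteki Shop. The query methods know nothing about where the data comes from, so a test can run them over a list wrapped with `AsQueryable()`.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical domain entity for this sketch.
public class Invoice
{
    public int Id { get; set; }
    public decimal Total { get; set; }
    public bool Paid { get; set; }
}

// Domain-level queries: they depend only on IQueryable<Invoice>,
// not on a DataContext or any other data access type.
public static class InvoiceQueries
{
    public static IQueryable<Invoice> Unpaid(this IQueryable<Invoice> invoices)
    {
        return invoices.Where(i => !i.Paid);
    }

    public static IQueryable<Invoice> OverLimit(this IQueryable<Invoice> invoices, decimal limit)
    {
        return invoices.Where(i => i.Total > limit);
    }
}
```

In a test, `invoices.AsQueryable().Unpaid().OverLimit(100m)` filters an in-memory list. The same chain applied to the IQueryable<Invoice> returned by a repository's GetAll() is composed into a single query for the underlying provider to execute.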

Here's a simple example. I've got the canonical Customer->Orders->OrderLines business entities. Now say I get a Customer from my IRepository<Customer>. I can then query my Customer's Orders using LINQ inside my controller:

    Customer customer = customerRepository.GetById(customerId);
    int numberOfOrders = customer.Orders.Count(); // LINQ

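I unit test this by returning a fully formed Customer with Orders from my mock customerRepository. When I run this code against a concrete repository, LINQ to SQL lazily loads the customer's orders the first time the collection is touched, with a SQL statement something like:

    SELECT * FROM [Order] WHERE CustomerId = 34

The count itself then happens in memory, because customer.Orders is an EntitySet<Order> rather than an IQueryable<Order>. To have the database do the counting with a SELECT COUNT(*), the query needs to be written against an IQueryable<Order>, such as the one returned by a repository's GetAll().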
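For comparison, here is a small sketch of the two ways the order count can be expressed. The `Customer`, `Order` and `OrderCounting` types are simplified, hypothetical stand-ins (a plain List<Order> takes the place of the LINQ to SQL collection). The first method counts over the object graph, which is what a unit test sees; the second is written against IQueryable<Order>, the form a query provider needs in order to translate the count into SQL.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Simplified, hypothetical entities for this sketch.
public class Order
{
    public int Id { get; set; }
    public int CustomerId { get; set; }
}

public class Customer
{
    public Customer() { Orders = new List<Order>(); }

    public int Id { get; set; }
    public List<Order> Orders { get; set; }
}

public static class OrderCounting
{
    // Counts over the in-memory object graph (LINQ to Objects).
    public static int CountOnGraph(Customer customer)
    {
        return customer.Orders.Count();
    }

    // Counts through an IQueryable<Order>, so the provider behind it
    // decides how the count is carried out.
    public static int CountViaQuery(IQueryable<Order> orders, int customerId)
    {
        return orders.Count(o => o.CustomerId == customerId);
    }
}
```

Both methods give the same answer for the same data; the difference is only in where the counting work can be done.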