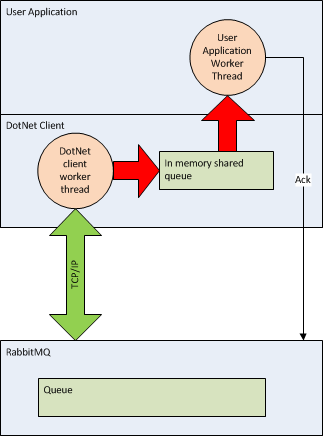

Do you ever find yourself in a loop calling a method that expects an Action or a Func as an argument? Here’s an example from an EasyNetQ test method where I’m doing just that:

    [Test, Explicit("Needs a Rabbit instance on localhost to work")]
    public void Should_be_able_to_do_simple_request_response_lots()
    {
        for (int i = 0; i < 1000; i++)
        {
            var request = new TestRequestMessage { Text = "Hello from the client! " + i.ToString() };
            bus.Request<TestRequestMessage, TestResponseMessage>(request, response =>
                Console.WriteLine("Got response: '{0}'", response.Text));
        }

        Thread.Sleep(1000);
    }

My initial naive implementation of IBus.Request set up a new response subscription each time Request was called. Obviously this is inefficient. It would be much nicer if I could identify when Request is called more than once with the same callback and re-use the subscription.

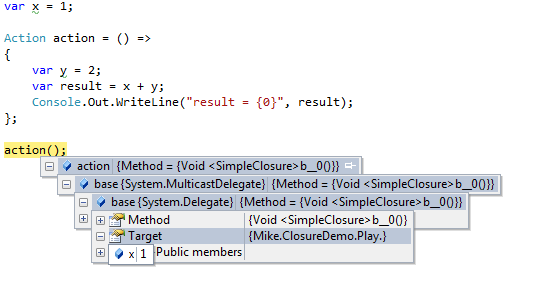

The question I had was: how can I uniquely identify each callback? It turns out that action.Method.GetHashCode() returns the same value for every delegate created from the same lambda expression, so it can serve as a key for the callback. I can demonstrate this with the following code:

    public class UniquelyIdentifyDelegate
    {
        readonly IDictionary<int, Action> actionCache = new Dictionary<int, Action>();

        public void DemonstrateActionCache()
        {
            for (var i = 0; i < 3; i++)
            {
                RunAction(() => Console.Out.WriteLine("Hello from A {0}", i));
                RunAction(() => Console.Out.WriteLine("Hello from B {0}", i));
                Console.Out.WriteLine("");
            }
        }

        public void RunAction(Action action)
        {
            Console.Out.WriteLine("Mehod = {0}, Cache Size = {1}", action.Method.GetHashCode(), actionCache.Count);
            if (!actionCache.ContainsKey(action.Method.GetHashCode()))
            {
                actionCache.Add(action.Method.GetHashCode(), action);
            }
            var actionFromCache = actionCache[action.Method.GetHashCode()];
            actionFromCache();
        }
    }

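Here, I’m creating an action cache keyed on the hash code of the action’s method. Then I’m calling RunAction a few times with two distinct action delegates. Note that they also close over a variable, i, from the outer scope. Because a for loop shares a single i across all iterations, the delegate cached on the first pass still prints the current value of i on later passes.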
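To see why this works, it helps to look at the Method property on its own. As far as I know, the C# compiler emits one method per lambda expression, so every delegate built from that expression points at the same MethodInfo. Here is a minimal sketch of that idea; MethodIdentitySketch, CreateActions and ShareMethod are names made up for this illustration:

```csharp
using System;
using System.Collections.Generic;

public static class MethodIdentitySketch
{
    // Builds several delegates from a single lambda expression inside a loop.
    public static List<Action> CreateActions(int count)
    {
        var actions = new List<Action>();
        for (var i = 0; i < count; i++)
        {
            actions.Add(() => Console.Out.WriteLine("Hello {0}", i));
        }
        return actions;
    }

    // True when both delegates point at the same compiled method.
    public static bool ShareMethod(Action first, Action second)
    {
        return first.Method.Equals(second.Method);
    }
}
```

Calling ShareMethod on any two delegates returned by CreateActions should give true, while comparing one of them with a delegate built from a different lambda expression should give false, even if the two lambda bodies look identical.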

Running DemonstrateActionCache() outputs the expected result:

    Mehod = 59022676, Cache Size = 0
    Hello from A 0
    Mehod = 62968415, Cache Size = 1
    Hello from B 0

    Mehod = 59022676, Cache Size = 2
    Hello from A 1
    Mehod = 62968415, Cache Size = 2
    Hello from B 1

    Mehod = 59022676, Cache Size = 2
    Hello from A 2
    Mehod = 62968415, Cache Size = 2
    Hello from B 2

Rather nice I think :)
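One thing to keep in mind: a hash code is not guaranteed to be unique, so two different methods could in principle produce the same int. A variation that sidesteps this is to key the cache on the MethodInfo itself rather than on its hash code. This is only a sketch under that assumption; MethodKeyedActionCache and GetOrAdd are hypothetical names, not part of EasyNetQ:

```csharp
using System;
using System.Collections.Generic;
using System.Reflection;

public class MethodKeyedActionCache
{
    // Keyed on the MethodInfo, so a hash collision between two different
    // methods is resolved by Equals instead of being treated as a match.
    private readonly Dictionary<MethodInfo, Action> actionCache =
        new Dictionary<MethodInfo, Action>();

    public int Count
    {
        get { return actionCache.Count; }
    }

    // Returns the first delegate seen for this method, caching it if needed.
    public Action GetOrAdd(Action action)
    {
        Action cached;
        if (!actionCache.TryGetValue(action.Method, out cached))
        {
            cached = action;
            actionCache.Add(action.Method, cached);
        }
        return cached;
    }
}
```

Passing the two lambdas from DemonstrateActionCache through GetOrAdd in the same loop should leave two entries in the cache, just as before. Note that the cache always hands back the first delegate it saw, so any variable captured per iteration (for example a local declared inside the loop body) would keep its value from that first call.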