15Below is based in Brighton on the south coast of England, famous for its Regency pavilion and Victorian pier. We provide messaging and integration services for the travel industry. Our clients include Ryanair, Qantas, JetBlue, Thomas Cook and around 30 other airline and rail customers. We send hundreds of millions of transactional notifications each year to our customers’ passengers.

RabbitMQ has helped us to significantly simplify and stabilise our software. It’s one of those black boxes that you install, configure, and then really don’t have to worry about. In over a year of production we’ve found it to be extremely stable and reliable.

Prior to introducing RabbitMQ our applications would use SQL Server as a queuing mechanism. Each task would be represented by a row in a workflow table. Each process in the workflow would poll the table looking for rows that matched its status, process the rows in a batch, and then update the rows’ status field for the next process to pick up. Each step in the process would be hosted by an application service that implemented its own threading model, often using a different approach to all the other services. This created highly coupled software, with workflow steps and infrastructure concerns, such as threading and load balancing, mixed together with business logic. We also discovered that a relational database is not a natural fit for a queuing system. The contention on the workflow tables is high, with constant inserts, selects and updates causing locking issues. Deleting completed items is also problematic on highly indexed tables and we had considerable problems with continuously growing tables.
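To make the old pattern concrete, here is a minimal sketch of one polling pass over a workflow table. It is an illustration only: SQLite stands in for SQL Server, and the table name, column names, status values and the `poll_step` helper are all hypothetical rather than taken from our actual schema.

```python
import sqlite3

# Hypothetical schema: one row per task, with a status column that each
# workflow step polls. SQLite stands in for SQL Server in this sketch.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE workflow (id INTEGER PRIMARY KEY, payload TEXT, status TEXT)"
)
conn.executemany(
    "INSERT INTO workflow (payload, status) VALUES (?, ?)",
    [("flight-1", "new"), ("flight-2", "new"), ("flight-3", "rendered")],
)


def poll_step(conn, from_status, to_status, work, batch_size=10):
    """Run one polling pass for a workflow step and return the ids handled."""
    rows = conn.execute(
        "SELECT id, payload FROM workflow WHERE status = ? ORDER BY id LIMIT ?",
        (from_status, batch_size),
    ).fetchall()
    for row_id, payload in rows:
        work(payload)
        # Handing the row to the next step is another UPDATE on the same
        # table that every other step is also selecting from and updating.
        conn.execute(
            "UPDATE workflow SET status = ? WHERE id = ?", (to_status, row_id)
        )
    conn.commit()
    return [row_id for row_id, _ in rows]


rendered = []
handled = poll_step(conn, "new", "rendered", rendered.append)
```

Even in this toy form, the step has to know the status values of its neighbours, and every step reads and writes the same table, which is where the coupling and contention described above come from.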

We first introduced RabbitMQ about 18 months ago as the core infrastructure behind our Flight Status product. We wanted a high performance messaging product with a proven track record that supported common messaging patterns, such as publish/subscribe and request/response. A further requirement was that it should provide automatic work distribution and load balancing.
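The difference between publish/subscribe and automatic work distribution can be sketched with a toy in-memory broker. This is not RabbitMQ's API or EasyNetQ's; `ToyBroker`, its methods, and the topic and queue names are hypothetical and exist only to show the two behaviours side by side: every named queue bound to a topic receives each message, while consumers sharing one queue take turns.

```python
from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a message broker (illustrative only)."""

    def __init__(self):
        self._bindings = defaultdict(list)   # topic -> queue names
        self._consumers = defaultdict(list)  # queue name -> consumer callables
        self._cursor = defaultdict(int)      # queue name -> round-robin counter

    def subscribe(self, topic, queue, consumer):
        if queue not in self._bindings[topic]:
            self._bindings[topic].append(queue)
        self._consumers[queue].append(consumer)

    def publish(self, topic, message):
        # Publish/subscribe: each queue bound to the topic gets the message.
        for queue in self._bindings[topic]:
            consumers = self._consumers[queue]
            # Work distribution: consumers on the same queue take turns.
            index = self._cursor[queue] % len(consumers)
            self._cursor[queue] += 1
            consumers[index](message)


broker = ToyBroker()
email_a, email_b, audit = [], [], []
broker.subscribe("flight.delayed", "email", email_a.append)
broker.subscribe("flight.delayed", "email", email_b.append)  # second copy
broker.subscribe("flight.delayed", "audit", audit.append)

for n in range(4):
    broker.publish("flight.delayed", f"msg-{n}")
```

In this sketch the two "email" consumers each handle half of the messages, while the "audit" queue sees all four. Adding the second email consumer required no change to the publisher or to the first consumer.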

The need to support messaging patterns ruled out simple store-and-forward queues such as MSMQ and ActiveMQ. We were very impressed by ZeroMQ, but decided that we really needed the centralised manageability of a broker based product. This left RabbitMQ. Although support for AMQP, an open messaging standard, wasn’t in our list of requirements, its implementation by RabbitMQ made us more confident that we were choosing a sustainable strategy.

We are very much a Microsoft shop, so we had some initial concerns about RabbitMQ’s performance and stability on Windows. We were reassured by reading some accounts of RabbitMQ’s and indeed Erlang’s use on Windows by organisations with some very impressive load requirements. Subsequent experience has borne these reports out, and we have found RabbitMQ on Server 2008 to be rock solid.

As a Microsoft shop, our development platform is .NET. Although VMware provide an AMQP C# client, it is a low-level API, not suitable for use by more junior developers. For this reason we created our own high-level .NET API for RabbitMQ that provides simple single-method access to common messaging patterns and does not require a deep knowledge of AMQP. This API is called EasyNetQ. We’ve open sourced it and, with over 3000 downloads, it is now the leading high-level API for RabbitMQ with .NET. You can find more information about it at EasyNetQ.com. We would recommend looking at it if you are a .NET shop using RabbitMQ.

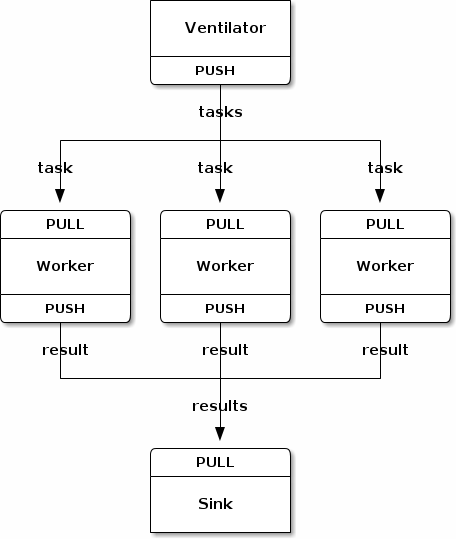

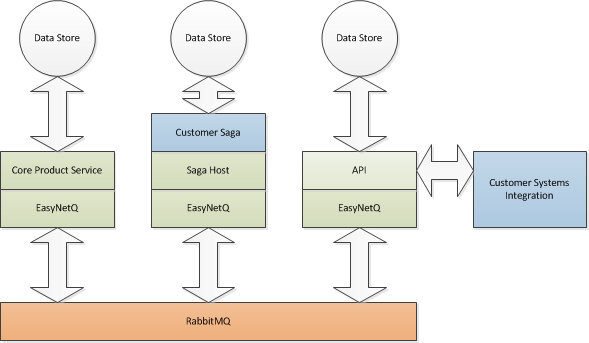

15Below’s Flight-Status product provides real-time flight information to passengers and their family and friends. We interface with the airline’s real-time flight information stream generated from their operational systems and provide a platform that allows them to apply complex business logic to this stream. We render customer-tailored output, and communicate with the airline’s customers via a range of channels, including email, SMS, voice and iPhone/Android push. RabbitMQ allows us to build each piece (the client for the flight information stream, the message renderer, the sink channels and the business logic) as separate components that communicate using structured messages. Our architecture looks something like this:

The green boxes are our core product systems; the blue boxes represent custom code that we write for each customer. A ‘customer saga’ is code that models a long-running business process and includes all the workflow logic for a particular customer’s flight information requirements. A ‘core product service’ is an independent service that implements a feature of our product. An example would be the service that takes flight information and combines it with a customer-defined template to create an email to be sent to a passenger. Constructing services as independently deployable and runnable applications gives us great flexibility and scalability. If we need to scale up a particular component, we simply install more copies. RabbitMQ’s automatic work sharing feature means that we can do this without any reconfiguration of existing components. This architecture also makes it easy to test each application service in isolation, since it’s simply a question of firing messages at the service and watching its response.
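As a rough illustration of testing a service in isolation, the sketch below models an email-rendering service as a plain message handler. The message types, field names, template text and the `EmailRenderer` class are all hypothetical and written in Python for brevity; our real services are .NET applications and their actual message contracts are not shown here.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class FlightStatusChanged:  # hypothetical inbound message
    flight_number: str
    status: str
    passenger_email: str


@dataclass(frozen=True)
class SendEmail:  # hypothetical outbound message
    to: str
    body: str


class EmailRenderer:
    """Combines flight information with a customer-defined template."""

    def __init__(self, template, publish):
        self._template = Template(template)
        self._publish = publish  # any callable that accepts a message

    def handle(self, message):
        body = self._template.substitute(
            flight=message.flight_number, status=message.status
        )
        self._publish(SendEmail(to=message.passenger_email, body=body))


# In a test, the broker is replaced by a list that collects what the
# service publishes; we fire one message in and inspect what comes out.
outbox = []
service = EmailRenderer("Flight $flight is now $status.", outbox.append)
service.handle(FlightStatusChanged("FR123", "delayed", "pax@example.com"))
```

Because the service only consumes and publishes messages, the test needs no database, no other services and no running broker.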

In conclusion, RabbitMQ has provided a rock-solid piece of infrastructure with the features to allow us to significantly reduce the architectural complexity of our systems. We can now build software for our clients faster and more reliably. It scales to higher loads than our previous relational-database-based systems and is more flexible in the face of changing customer requirements.