A typical public ASP.NET website hosted on IIS is usually configured so that the server running IIS is visible to the public internet. HTTP requests from a browser or web service client are routed directly to IIS, which also hosts the ASP.NET worker process. All the functionality needed to produce the website is embodied in a single server, including caching, SSL termination, authentication, serving static files and compression. This approach is simple and straightforward for small sites, but it is hard to scale, both in terms of performance and in terms of managing the complexity of a large application. This is especially true if you have a distributed service-oriented architecture with multiple HTTP endpoints that appear and disappear frequently.

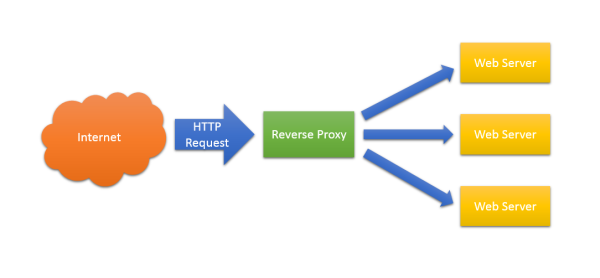

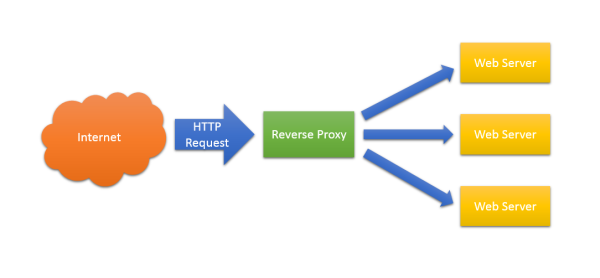

A reverse proxy is a server component that sits between the internet and your web servers. It accepts HTTP requests, provides various services, and forwards the requests to one or many servers.

Having a point at which you can inspect, transform and route HTTP requests before they reach your web servers provides a whole host of benefits. Here are some:

Load Balancing

This is the reverse proxy function that people are most familiar with. Here the proxy routes incoming HTTP requests to a number of identical web servers. This can work on a simple round-robin basis, or, if you have stateful web servers (it’s better not to), there are session-aware load balancers available. It’s such a common function that load balancing reverse proxies are usually just referred to as ‘load balancers’. There are specialized load balancing products available, but many general purpose reverse proxies also provide load balancing functionality.
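As a rough illustration of the round-robin idea, here is a minimal Python sketch. The class name and the internal server addresses are made up for the example, and a real load balancer would also need health checks and thread safety, which are left out here.

```python
import itertools


class RoundRobinBalancer:
    """Hands out back-end addresses in a fixed, repeating order."""

    def __init__(self, backends):
        backends = list(backends)
        if not backends:
            raise ValueError("at least one back-end server is required")
        # itertools.cycle repeats the list forever, one item per call to next().
        self._cycle = itertools.cycle(backends)

    def next_backend(self):
        """Return the back-end that should receive the next request."""
        return next(self._cycle)


# Hypothetical internal addresses, for illustration only.
balancer = RoundRobinBalancer([
    "http://web1.internal.net",
    "http://web2.internal.net",
    "http://web3.internal.net",
])

# Four requests in a row: the fourth wraps around to the first server.
picks = [balancer.next_backend() for _ in range(4)]
```

Because no server is remembered per client, this only works well when the web servers are stateless, which is the reason statelessness is preferred.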

Security

A reverse proxy can hide the topology and characteristics of your back-end servers by removing the need for direct internet access to them. You can place your reverse proxy in an internet facing DMZ, but hide your web servers inside a non-public subnet.

Authentication

You can use your reverse proxy to provide a single point of authentication for all HTTP requests.

SSL Termination

Here the reverse proxy handles incoming HTTPS connections, decrypting the requests and passing unencrypted requests on to the web servers. This has several benefits:

- Removes the need to install certificates on many back end web servers.

- Provides a single point of configuration and management for SSL/TLS.

- Takes the processing load of encrypting/decrypting HTTPS traffic away from web servers.

- Makes testing and intercepting HTTP requests to individual web servers easier.

Serving Static Content

Strictly speaking this is not ‘reverse proxying’ as such, but some reverse proxy servers can also act as web servers serving static content. The average web page can often consist of megabytes of static content such as images, CSS files and JavaScript files. By serving these separately you can take considerable load from back-end web servers, leaving them free to render dynamic content.

Caching

The reverse proxy can also act as a cache. You can either have a dumb cache that simply expires after a set period, or better still a cache that respects Cache-Control and Expires headers. This can considerably reduce the load on the back-end servers.
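To make "respecting Cache-Control" a little more concrete, here is a simplified Python sketch of how a shared cache might decide how long to keep a response. The function name is invented for the example, the headers are assumed to arrive as a plain dict keyed by `Cache-Control`, and the logic deliberately ignores the `Expires` header, revalidation and many other rules that a real cache has to follow.

```python
import re

# Directives that this simplified sketch treats as "do not cache at all".
UNCACHEABLE = {"no-store", "no-cache", "private"}


def shared_cache_ttl(headers):
    """Return how many seconds a shared cache may keep the response."""
    value = headers.get("Cache-Control", "")
    directives = [d.strip().lower() for d in value.split(",") if d.strip()]

    if UNCACHEABLE.intersection(directives):
        return 0

    ages = {}
    for directive in directives:
        match = re.fullmatch(r"(s-maxage|max-age)=(\d+)", directive)
        if match:
            ages[match.group(1)] = int(match.group(2))

    # s-maxage is aimed at shared caches such as a proxy, so it wins.
    if "s-maxage" in ages:
        return ages["s-maxage"]
    return ages.get("max-age", 0)
```

For example, `shared_cache_ttl({"Cache-Control": "public, max-age=600"})` gives 600, while a response marked `private` gives 0 and is passed straight through.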

Compression

To reduce the bandwidth needed for individual requests, the reverse proxy can decompress incoming requests and compress outgoing responses. This reduces the load on the back-end servers, which would otherwise have to do the compression themselves, and makes it easier to debug requests to, and responses from, the back-end servers.
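The outgoing half of this can be sketched in a few lines of Python using the standard `gzip` module. The function name is hypothetical, and the `Accept-Encoding` parsing is intentionally naive: it ignores quality values such as `gzip;q=0`, which a real proxy would need to honour.

```python
import gzip


def compress_for_client(body, accept_encoding):
    """Gzip the response body if the client says it accepts gzip.

    Returns the (possibly compressed) body and any extra response headers.
    """
    accepted = {
        part.split(";")[0].strip().lower()
        for part in accept_encoding.split(",")
    }
    if "gzip" in accepted:
        return gzip.compress(body), {"Content-Encoding": "gzip"}
    # The client did not ask for gzip, so pass the body through untouched.
    return body, {}
```

The back-end server only ever sees and produces plain, uncompressed bodies, which is what makes its traffic easier to inspect.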

Centralised Logging and Auditing

Because all HTTP requests are routed through the reverse proxy, it makes an excellent point for logging and auditing.

URL Rewriting

Sometimes the URL scheme that a legacy application presents is not ideal for discovery or search engine optimisation. A reverse proxy can rewrite URLs before passing them on to your back-end servers. For example, a legacy ASP.NET application might have a URL for a product that looks like this:

http://www.myexampleshop.com/products.aspx?productid=1234

You can use a reverse proxy to present a search engine optimised URL instead:

http://www.myexampleshop.com/products/1234/lunar-module
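A rewrite rule like this usually boils down to a pattern match on the incoming path. The Python sketch below shows the idea for the product URL above; the function name and the exact regular expression are assumptions made for the example, not the syntax of any particular proxy.

```python
import re

# Matches /products/<numeric id>, optionally followed by a descriptive slug.
FRIENDLY_PRODUCT_URL = re.compile(r"^/products/(\d+)(?:/[^/?#]*)?/?$")


def rewrite_path(path):
    """Map a search-engine-friendly path onto the legacy ASP.NET URL."""
    match = FRIENDLY_PRODUCT_URL.match(path)
    if match is None:
        # Not a product URL: forward it unchanged.
        return path
    return "/products.aspx?productid=" + match.group(1)
```

With this rule, `/products/1234/lunar-module` is forwarded to the back-end as `/products.aspx?productid=1234`. The slug is there purely for humans and search engines; only the numeric id is used.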

Aggregating Multiple Websites Into the Same URL Space

In a distributed architecture it’s desirable to have different pieces of functionality served by isolated components. A reverse proxy can route different branches of a single URL address space to different internal web servers.

For example, say I’ve got three internal web servers:

http://products.internal.net/

http://orders.internal.net/

http://stock-control.internal.net/

I can route these from a single external domain using my reverse proxy:

http://www.example.com/products/ -> http://products.internal.net/

http://www.example.com/orders/ -> http://orders.internal.net/

http://www.example.com/stock/ -> http://stock-control.internal.net/

To an external customer it appears that they are simply navigating a single website, but internally the organisation is maintaining three entirely separate sites. This approach can work extremely well for web service APIs, where the reverse proxy provides a single, consistent public facade to an internal, distributed, component-oriented architecture.
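The mapping above is essentially a lookup from path prefix to internal server. Here is a minimal Python sketch of that lookup, using the three example servers; the `ROUTES` table and `resolve` function are illustrative names, and a real proxy would express this in its own configuration rather than in code.

```python
# Public path prefix -> internal server, taken from the example above.
ROUTES = {
    "/products/": "http://products.internal.net/",
    "/orders/": "http://orders.internal.net/",
    "/stock/": "http://stock-control.internal.net/",
}


def resolve(path):
    """Return the internal URL for a public path, or None if nothing matches."""
    # Try the longest prefixes first so that more specific routes win.
    for prefix in sorted(ROUTES, key=len, reverse=True):
        if path.startswith(prefix):
            return ROUTES[prefix] + path[len(prefix):]
    return None
```

So a request for `/orders/42` on the public domain would be forwarded to `http://orders.internal.net/42`, and a path that matches no prefix returns `None`, which the proxy could turn into a 404.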

So …

So, a reverse proxy can offload many of the infrastructure concerns of a high-volume distributed web application.

We’re currently looking at Nginx for this role. Expect some practical Nginx-related posts about how to do some of this stuff in the very near future.

Happy proxying!